It’s been quiet here for the past few months, and I suspect some of you might have thought I’d abandoned ship. The truth is rather different. I haven’t stopped engaging with AI in education – quite the opposite. I’ve been so deeply immersed in the work that writing about it became just one more thing on an already groaning to-do list.

The Work Behind the Silence

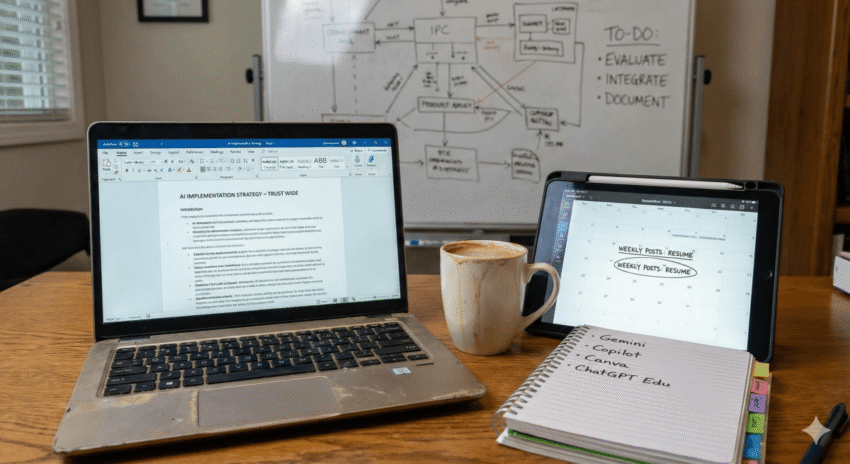

Since September, I’ve been developing AI implementation strategies across our entire trust. This wasn’t part of any official role expansion, just a case of carving out time where none really existed to address something that clearly needed attention. The work has been intensive, revealing both effective strategies and unexpected challenges. There’s much to unpack from this experience, and subsequent posts will explore what I’ve learned about rolling out AI literacy and integration at scale.

What strikes me most is how this mirrors a pattern I’ve seen repeatedly in education: those most engaged with emerging practices often find themselves stretched between doing the work and documenting it. The two rarely happen simultaneously.

A Remarkable Quarter for Educational AI

Looking back at the period from September to early December, I’d argue we’ve witnessed the most significant developments in AI and education since I started writing about this field. Not necessarily the flashiest announcements or the most hyped releases, but rather a convergence of practical educational tools reaching genuine maturity.

Consider what’s landed in these few months. Gemini has seen substantial updates. ChatGPT has evolved considerably, with a specific educational version now available. Google has woven Gemini’s capabilities into Classroom environments. NotebookLM has continued its evolution. Microsoft, after what felt like a prolonged period of deliberation, has finally begun integrating Copilot more meaningfully into its educational software suite. Its partnership with Anthropic to power Copilot represents a genuine step forward, particularly for those of us working within Microsoft-dominated educational environments.

Then there’s Canva, which has unleashed such a torrent of updates that properly exploring their capabilities would constitute a full-time job in itself. The fact that Canva remains committed to providing free access to educators worldwide makes these developments particularly significant.

The Challenge of Keeping Pace

The truth is that the pace of development has become genuinely overwhelming. Not in a “this is exciting” way, but in a “how do we possibly evaluate and implement all of this responsibly” way. Each update, each new feature, each platform evolution requires careful consideration before bringing it into educational contexts. We need to understand not just what these tools can do, but what they should do in our specific settings.

This creates a tension between staying current and staying thoughtful. Between documenting developments and actually implementing them. Between sharing insights and protecting the time needed to develop those insights in the first place.

Moving Forward

I’m going to try to commit to weekly posts from this point forward. Not the occasional burst of multiple posts that characterised my earlier approach, but a more sustainable rhythm that acknowledges the realities of working in education whilst trying to make sense of rapidly evolving technology.

The immediate posts will cover the trust-wide work I’ve been doing, exploring both successes and stumbling blocks. What strategies have worked when introducing AI tools to colleagues at various stages of digital confidence? How do you balance enthusiasm for potential with appropriate caution about limitations? What does meaningful AI integration actually look like when you move beyond pilot projects and individual classrooms?

A Brief Apology

To those who’ve followed this blog from its early days, I owe an apology for the silence. I know consistency matters when you’re trying to make sense of a moving target, and my absence hasn’t helped anyone navigate these waters. The irony isn’t lost on me that I’ve been too busy doing AI work to write about AI work.

But perhaps that’s appropriate. This field demands practitioners, not just commentators. It needs people willing to test these tools in real classrooms with real students facing real constraints. The writing matters, but only insofar as it serves the practice.

What’s Actually Changed

Looking back over these months, here’s what feels genuinely different. We’ve moved from experimental AI tools that occasionally worked in educational contexts to educational AI tools that mostly work. The distinction matters enormously. We’re no longer trying to retrofit consumer products for classroom use. We’re working with platforms that have educational use cases built into their design from the start.

This doesn’t mean these tools are perfect or that their implementation is straightforward. It means the conversation has shifted from “could this possibly work in education?” to “how do we make this work well in our specific educational context?” That shift, subtle as it might seem, represents genuine progress.

The Path Ahead

Normal service resumes here – well, that’s the idea at least – though normality itself feels like an increasingly elusive concept in educational technology. Perhaps that’s the point. We’re not returning to normal. We’re finding a new rhythm that acknowledges both the pace of technological change and the enduring realities of educational practice.

The ride continues. Let’s see where it takes us.